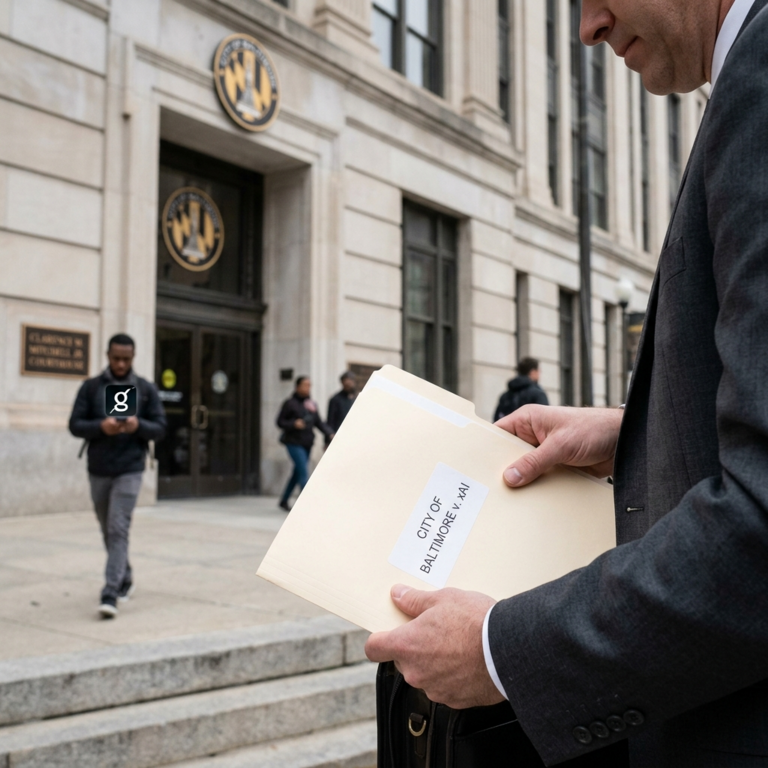

The city of Baltimore has filed a lawsuit against xAI, the company behind the AI chatbot Grok, over the alleged misuse of that chatbot. The lawsuit claims that xAI failed to disclose the risks associated with Grok’s image generation tool, leading to potential harm for users on the Grok platform and the X social network (formerly known as Twitter). Baltimore argues that xAI violated the city’s Consumer Protection Ordinance by not implementing sufficient safeguards to prevent the creation and spread of deepfake content. This legal action marks a significant development in the ongoing debate surrounding AI ethics and the responsibility of tech companies in protecting consumers from potential harm.

Deepfake technology has increasingly become a concern for regulators and the public due to its potential for misuse, particularly in creating non-consensual and malicious content. The lawsuit against xAI highlights the challenges faced by companies in balancing innovation with ethical considerations. While AI chatbots like Grok have the potential to revolutionize communication and customer service, they also pose risks in enabling the creation of deceptive and harmful content. The case brings to light the need for clear guidelines and regulations to govern the use of AI tools, especially in sensitive areas such as image manipulation and deepfake generation.

The legal action taken by Baltimore against xAI could set a precedent for holding tech companies accountable for the impact of their AI products on users and society. By alleging that xAI failed to implement ‘meaningful guardrails’ for Grok, the city is seeking to address the potential harms caused by deepfake technology. This lawsuit could lead to increased scrutiny and regulation of AI-powered platforms, forcing companies to prioritize user safety and transparency in their product development and marketing strategies.

The Grok deepfake controversy also raises questions about the responsibility of social media platforms in combating the spread of harmful content. As AI tools like Grok become more accessible to the general public, the risk of misuse and abuse increases, posing challenges for platforms in detecting and removing malicious content. The lawsuit against xAI underscores the need for collaboration between tech companies, regulators, and law enforcement agencies to address the growing threat of deepfake technology and protect users from potential harm.

In response to the lawsuit, xAI has defended its practices, stating that it takes the allegations seriously and is committed to addressing the concerns raised by Baltimore. The company has emphasized its commitment to responsible AI development and has pledged to work with regulators to ensure compliance with applicable laws and regulations. xAI’s response reflects a growing awareness among tech companies of the need to prioritize ethics and user safety in the design and deployment of AI technologies.

Overall, the Baltimore lawsuit against xAI over the Grok deepfake controversy may mark a turning point in the public discourse on AI ethics and the regulation of AI-powered platforms. As deepfake technology continues to evolve and proliferate, the need for clear guidelines and accountability mechanisms becomes more urgent. This case serves as a wake-up call for tech companies to prioritize user protection and ethical considerations in their AI development processes, and its outcome could shape how AI image tools are built and regulated.