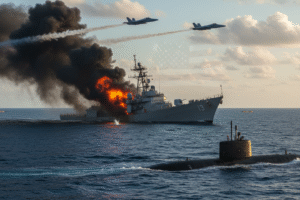

Anthropic, a leading AI company, has worked with the US government to develop a filter designed to keep its AI system, Claude, from inadvertently helping anyone develop nuclear weapons. The collaboration reflects growing concern that AI could be misused in sensitive areas such as national security and arms control. Experts in the field are still assessing how effective the safeguard is, and many stress that robust controls of this kind are essential to deploying AI responsibly.

Anthropic’s partnership with the US government comes amid growing scrutiny of the ethical implications of AI. The company also has a $200 million agreement with the Department of Defense, a significant investment in developing AI capabilities that prioritize preventing authoritarian misuse. Drawing on its experience with responsible AI deployment, Anthropic could set a new standard for how AI companies work with government agencies on critical security concerns.

Building a filter that blocks Claude from contributing to nuclear weapons development is a significant step in the evolution of AI safeguards. As AI systems grow more complex and capable, the need for measures that head off unintended consequences before they occur becomes more pressing. Anthropic’s initiative takes exactly this kind of preventive approach to the risks of its own technology, and it sets a precedent for other companies to follow.

The implications of the collaboration extend well beyond AI development. By putting strict controls in place to keep its AI systems out of activities that threaten national security, Anthropic is encouraging a culture of responsible innovation across the tech industry. The partnership also offers a model for how companies and government agencies can work together to ensure that AI is used ethically.

As debate over AI ethics and governance intensifies, Anthropic’s safeguards against misuse in sensitive domains set a positive example for the industry. Building tools and protocols that uphold ethical standards both strengthens the security of Anthropic’s own systems and contributes to the broader conversation about responsible AI deployment. The collaboration with the US government is a meaningful step toward ensuring that AI remains a force for good in society.