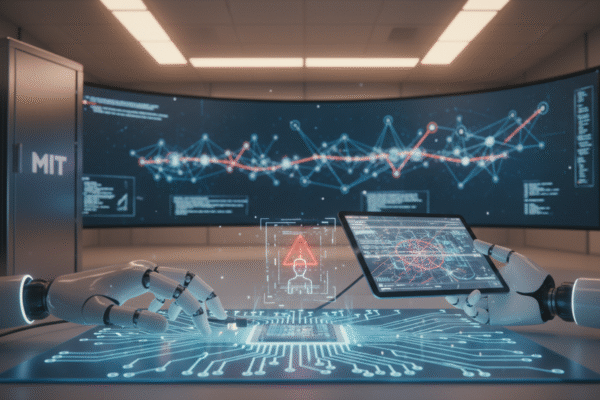

MIT study reveals AI agents lack safety testing and shutdown protocols, posing risks for users

A study by MIT and collaborators has found that the majority of AI systems do not disclose safety testing information and lack protocols to shut down rogue bots. These gaps pose significant risks for users and for industries that rely on AI technology.