Lawmakers have introduced the bipartisan GUARD Act, a bill that would impose age restrictions on AI chatbots to protect minors from potential harm. The legislation would require AI companies to implement age verification measures and privacy protections, and to disclose clearly that a chatbot is not a human. It comes in response to lawsuits alleging that AI chatbots have led to tragic outcomes for some young users, which has prompted calls for stricter regulation.

The GUARD Act underscores growing concern over the ethical implications of AI technology, especially for vulnerable populations such as children. By holding AI companies accountable for ensuring their chatbots are not accessible to minors, the bill seeks to address the risks of exploitation and manipulation that can arise when young users interact with chatbots. The legislation signals a shift toward greater responsibility and transparency in how AI-powered tools are developed and deployed.

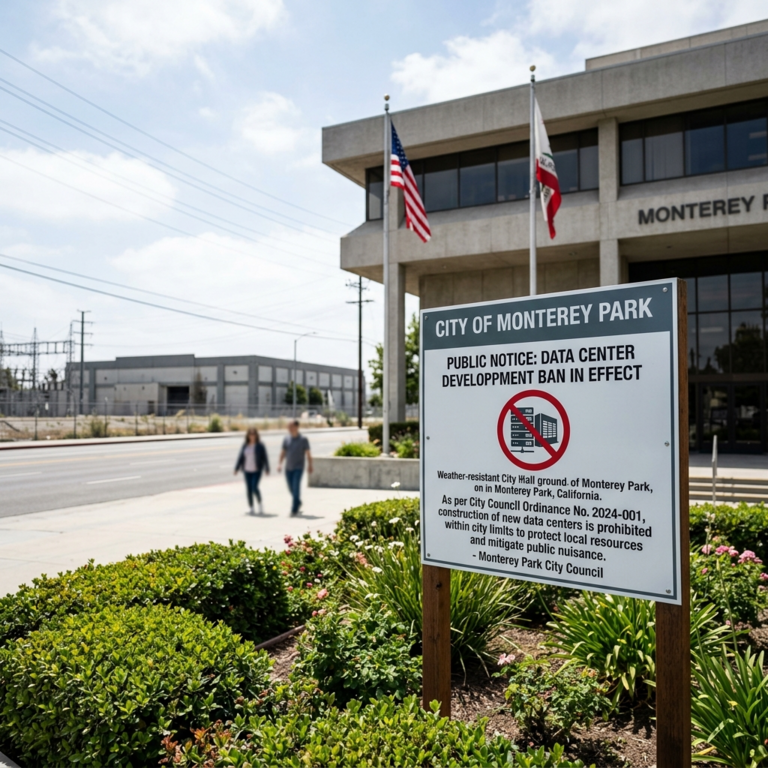

Supporters present the proposed age restrictions as a significant step toward improving child safety online and promoting responsible AI use. As AI technologies become part of more aspects of everyday life, safeguards that protect users, particularly minors, from harm or exploitation become more important. By setting clear guidelines for AI companies and platforms, the GUARD Act aims to create a safer digital environment for young people.

The push for age restrictions on AI chatbots reflects a broader conversation about the ethics of AI development and deployment. As AI becomes more pervasive in society, questions about accountability, transparency, and user protection have come to the forefront. The GUARD Act is an attempt to address these concerns through legislation and to ensure that AI technologies are used responsibly, especially where the well-being of children is at stake.

Overall, the introduction of the GUARD Act highlights the importance of prioritizing user safety and well-being in the design and implementation of AI systems. By proposing age restrictions on AI chatbots, lawmakers are seeking to protect minors from harmful interactions with these systems. As the technology advances, innovation will need to be balanced against ethical considerations, particularly the protection of the most vulnerable members of society.