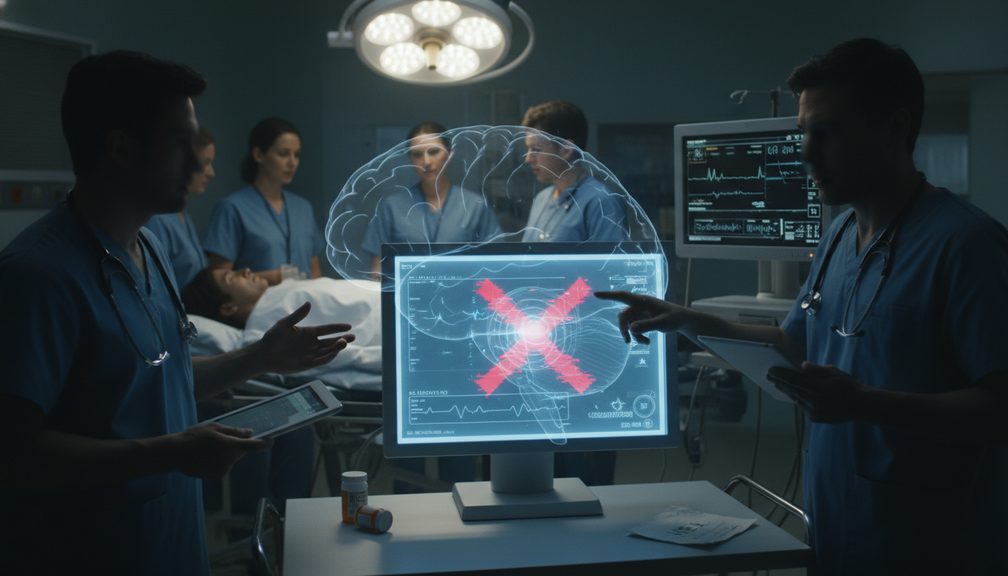

As the debate over the role of artificial intelligence in healthcare intensifies, recent concerns about ChatGPT Health’s flaws in recognizing medical emergencies have reignited discussions on the limitations of technology. A study revealing the AI platform’s shortcomings in identifying urgent care needs and suicidal ideation underscores the risks of over-relying on algorithms in critical healthcare decisions. While AI undoubtedly offers valuable tools for medical professionals, the human element of judgment, empathy, and intuition must not be discounted. The essence of healthcare lies in the compassionate understanding of patients’ unique circumstances and needs, a realm where machines, no matter how advanced, may fall short.

The epidemic of loneliness and isolation, exacerbated by technological advancements and societal changes, further highlights the irreplaceable value of human connection and support in addressing mental health challenges. As individuals grapple with feelings of disconnection and alienation, the need for genuine human interaction, empathy, and understanding becomes increasingly urgent. While AI-driven solutions like ChatGPT Therapy may offer some benefits, they cannot fully replicate the depth of human connection and therapeutic rapport essential for effective mental health treatment. Meeting these needs requires a holistic approach that embraces the role of community, relationships, and shared experiences in fostering emotional well-being.

In the realm of healthcare, as in economics and governance, the principles of conservatism emphasize the importance of individual responsibility and human agency. While technological advancements hold promise for enhancing efficiency and expanding access to care, they must be balanced with a recognition of the inherent limitations of AI in addressing complex human emotions and experiences. The conservative worldview values the autonomy and dignity of each individual, advocating for a society where self-reliant citizens take charge of their well-being and contribute to the common good. Embracing a nuanced approach that integrates both technological innovation and human-centered care is essential for safeguarding the holistic health and flourishing of individuals and communities.

Moreover, the recent revelations about ChatGPT Health’s deficiencies point to the need for prudent oversight and regulation to ensure the ethical and responsible use of AI in healthcare. As we navigate the evolving landscape of digital health solutions, it is crucial to uphold rigorous standards that prioritize patient safety, privacy, and informed consent. The conservative ethos of prudence and skepticism towards unchecked technological advancement aligns with the imperative of safeguarding the integrity and ethical practice of medicine in the face of rapid innovation.

Ultimately, the intersection of technology and healthcare presents a complex terrain where the values of conservatism, rooted in tradition, community, and human dignity, offer essential guidance. By recognizing the complementarity of AI-driven tools and human-centered care, we can forge a path that harnesses the benefits of innovation while preserving the irreplaceable elements of human connection and compassion in healing. As we confront the challenges of mental health and well-being in an increasingly digitized world, let us uphold the timeless values of personal responsibility, community support, and ethical governance that form the bedrock of conservative thought and practice.